As many of you have probably already read elsewhere, there has recently been a large iceberg calving event in the Antarctic, at the Larsen C ice shelf on the east side of the Antarctic Peninsula. The interesting thing about this is much less the event itself (yes, it is a large iceberg and yes, it probably is part of a general decline of ice shelves at the Antarctic Peninsula over the last century or so, but no, this is not in any way record breaking or particularly alarming if you look at things with a time horizon of more than a decade). The interesting thing is more the style of communication about it.

Icebergs of this size and larger occur in the Antarctic at intervals of several years to a few decades. It is known that many of the large ice shelf plates show a cyclic growth pattern interrupted by the calving of large icebergs like this one, sometimes on a time scale of more than fifty years. This is the first such event that was closely tracked remotely. In the past people usually noticed such events a few days or weeks after they happened, while in this case there was a lot of anticipation as the cracks in the ice developed, and for months there were frequent predictions that the calving was expected any day now. In other words: a lot of people apparently were quite wrong in their assumptions about how exactly such a calving would progress.

I am not really that familiar with the dynamics of ice myself, so I won’t analyze this in detail. The important thing to keep in mind is that ice at the scale of tens to hundreds of kilometers, and under the pressures involved here, behaves very differently from what you would expect by analogy with ice on a lake or a river that you might observe up close.

The other interesting thing about this iceberg calving is that it happened in the middle of the polar night. Since the Larsen C ice shelf is located south of the Antarctic Circle, this means permanent darkness during this time. So how do you observe an event in the dark using open data satellite images?

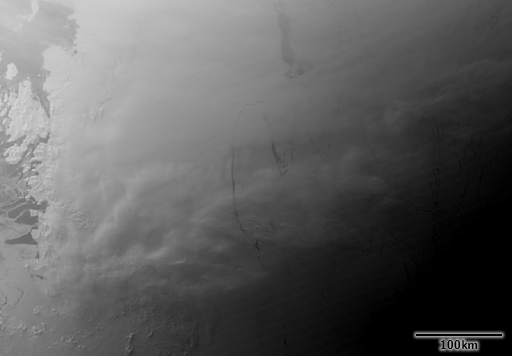

One of the most interesting possibilities for nighttime observation is the Day/Night Band of the VIIRS instrument. This is a visible/NIR range sensor capable of recording very low light levels. It is best known from the nighttime city lights visualizations you can find, which use artificial colors.

This sensor is capable of recording images illuminated by moonlight or other light sources like the aurora. So we got some of the earliest images of the free floating iceberg from VIIRS.

This image uses a logarithmic scale; the actual radiance varies across the shown area by about three orders of magnitude.
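To illustrate why a logarithmic scale helps with such a range, here is a minimal sketch that maps radiance values spanning three orders of magnitude to display brightness. The helper name `log_stretch` and the sample values are assumptions made up for illustration, not the processing actually used for the image.

```python
import numpy as np

def log_stretch(radiance, r_min, r_max):
    """Map radiance to [0, 1] on a logarithmic scale (hypothetical helper)."""
    r = np.clip(np.asarray(radiance, dtype=float), r_min, r_max)
    return (np.log10(r) - np.log10(r_min)) / (np.log10(r_max) - np.log10(r_min))

# Made-up radiances spanning three orders of magnitude (arbitrary units).
samples = np.array([1e-10, 1e-9, 1e-8, 1e-7])

# Logarithmic stretch: each order of magnitude gets the same share of the range.
print(log_stretch(samples, 1e-10, 1e-7))

# Linear stretch for comparison: the three darkest values end up nearly black.
print((samples - 1e-10) / (1e-7 - 1e-10))
```

With a linear stretch most of the display range would be spent on the brightest decade, which is why the darker parts of a moonlit scene would be hard to see.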

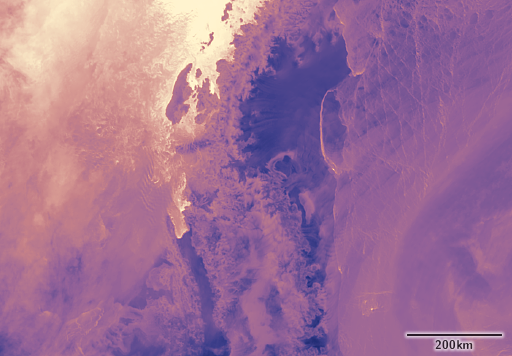

Another, much older and better established way to observe at night is in the thermal infrared. In the long wavelength infrared the earth surface emits radiation according to its temperature, and this emission is nearly the same during the day and at night. Clouds emit in the long wavelength infrared as well, which makes thermal infrared imaging an attractive and widely used option for 24h weather observations by weather satellites.
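As a rough illustration of temperature-dependent emission, the sketch below evaluates Planck's law for an ideal blackbody and inverts it to get a brightness temperature. The wavelength of 11 µm, the two example temperatures and the function names are assumptions chosen for illustration; real surfaces are not perfect blackbodies and real sensor bands have a finite width.

```python
import math

H = 6.62607015e-34  # Planck constant, J s
C = 2.99792458e8    # speed of light, m/s
K = 1.380649e-23    # Boltzmann constant, J/K

def planck_radiance(wavelength_m, temp_k):
    """Blackbody spectral radiance in W m^-2 sr^-1 m^-1."""
    x = H * C / (wavelength_m * K * temp_k)
    return 2.0 * H * C**2 / wavelength_m**5 / math.expm1(x)

def brightness_temperature(wavelength_m, radiance):
    """Invert Planck's law: temperature of a blackbody emitting this radiance."""
    a = 2.0 * H * C**2 / wavelength_m**5
    return H * C / (wavelength_m * K * math.log1p(a / radiance))

wl = 11e-6  # assumed thermal infrared wavelength in meters
for name, temp in [("open water", 271.0), ("cold ice surface", 240.0)]:
    rad = planck_radiance(wl, temp)
    # Radiance converted to W m^-2 sr^-1 um^-1, then inverted back to kelvin.
    print(name, rad * 1e-6, brightness_temperature(wl, rad))
```

The warmer surface emits noticeably more at this wavelength, which is the contrast a thermal infrared image records, with or without sunlight.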

Thermal infrared data is available from the previously mentioned VIIRS instrument but also from MODIS and Sentinel-3 SLSTR. Here is an example of the area from SLSTR.

During the polar night the highest temperatures occur at open water surfaces, like on the west side of the Antarctic Peninsula at the upper left, and the lowest temperatures in this area occur on the ice shelf surfaces and the low elevation parts of the glaciers on the east side of the Antarctic Peninsula. The outline of the new iceberg is clearly visible because of the relatively warm open water and thin ice in the gap between the iceberg and the ice shelf.

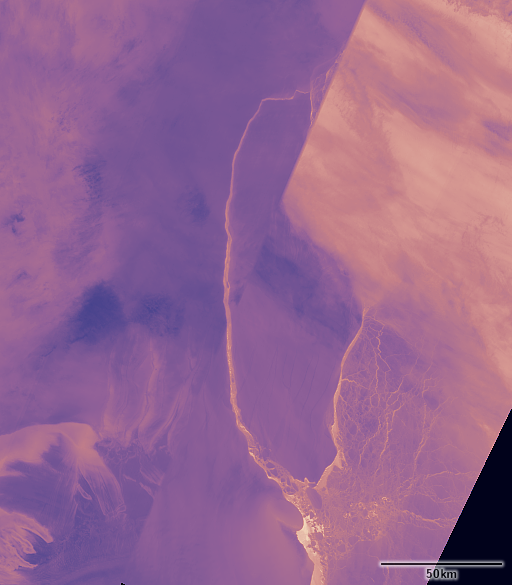

Thermal infrared images are also available in significantly higher resolution from Landsat and ASTER. Landsat does not regularly record nighttime images in the Antarctic, but images have been recorded recently in the area because of the special interest in the event. Here is an assembly of images from July 12 and July 14.

You can see there is a significant amount of cloud cover, especially in the scenes on the right side, obscuring the ice features beneath it. Here is a magnified crop showing the level of detail available.

ASTER so far does not offer any recent images of the area. In principle ASTER currently provides the highest resolution thermal imaging capability – both in the open data domain and in the world of publicly available systems in general – though there are probably classified higher resolution thermal infrared sensors in operation.

So far everything shown has been from passive sensors recording natural reflections and emissions. The other possibility for nighttime observations is through active sensors, in particular radar. This has the added advantage that it is independent of clouds (which are essentially transparent at the wavelengths used).

Radar, however, is not inherently an image recording system. Take the classical scope of a navigational radar system, for example: the two-dimensional image is built by plotting the run time of the reflected signal received from the different directions. Essentially the same applies to radar data from satellites.
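The relation between signal run time and distance can be sketched in a few lines. The function names and the slant range of 850 km are assumptions for illustration, and the sketch ignores the small delay added by the atmosphere.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s

def slant_range_m(delay_s):
    """Distance to the reflector; the signal travels there and back."""
    return C * delay_s / 2.0

def echo_delay_s(range_m):
    """Two-way run time for a reflector at the given distance."""
    return 2.0 * range_m / C

# An assumed slant range of 850 km gives an echo delay of roughly 5.7 ms.
delay = echo_delay_s(850e3)
print(delay * 1e3, "ms")
print(slant_range_m(delay) / 1e3, "km")
```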

Satellite-based radar systems that produce open data products currently exist on the Sentinel-3 and Sentinel-1 satellites. Sentinel-3 features a radar altimeter capable of measuring the surface elevation in a narrow strip directly below the satellite. This is not really of interest for the purpose of observing an iceberg calving.

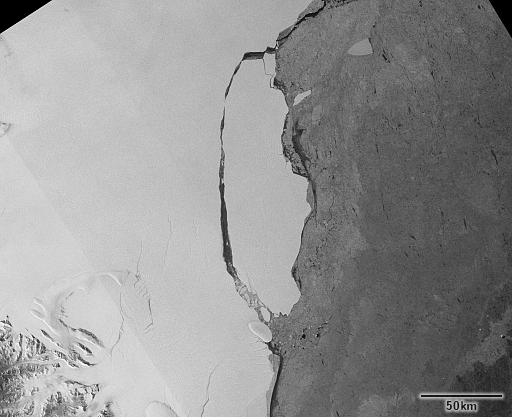

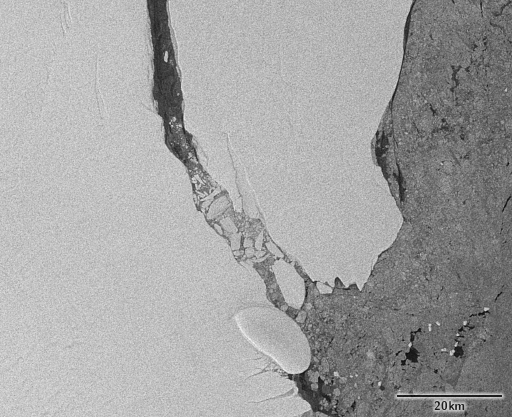

Sentinel-1, on the other hand, features a classical imaging radar system. The most widely used data product from Sentinel-1 is the Ground Range Detected data, which is commonly visualized either in the form of a grayscale image or as a false color image when several polarizations are combined. Here is an example of the area under discussion.
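One possible way to build such a false color image from two polarizations is sketched below. The channel assignment (co-polarized in red, cross-polarized in green, their ratio in blue), the decibel ranges and all function names are assumptions for illustration only; this is not necessarily how the image shown here was produced, and the input is assumed to be backscatter power that has already been read into arrays.

```python
import numpy as np

def to_db(power):
    """Convert linear backscatter power to decibels, guarding against zeros."""
    return 10.0 * np.log10(np.maximum(power, 1e-10))

def scale(values, lo, hi):
    """Linearly map the range [lo, hi] to [0, 1] and clip everything outside."""
    return np.clip((values - lo) / (hi - lo), 0.0, 1.0)

def false_color(co_pol, cross_pol):
    """Stack two polarizations into an RGB array (assumed channel layout)."""
    co_db, cross_db = to_db(co_pol), to_db(cross_pol)
    red = scale(co_db, -25.0, 0.0)             # co-polarized backscatter
    green = scale(cross_db, -30.0, -5.0)       # cross-polarized backscatter
    blue = scale(co_db - cross_db, 0.0, 15.0)  # ratio of the two, in dB
    return np.dstack([red, green, blue])

# Tiny made-up example: 2 x 2 pixels of linear backscatter power.
co = np.array([[0.30, 0.05], [0.10, 0.01]])
cross = np.array([[0.03, 0.004], [0.02, 0.001]])
print(false_color(co, cross).shape)  # (2, 2, 3)
```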

Note that while this looks like an image viewed from above, it is not. The two-dimensional image is created by interpreting run time as distance (which is a quite accurate assumption) and then mapping the distance to a point on an ellipsoid model of earth (which is an extreme simplification). In other words: the image shows the radar reflection strength at the position where it would have come from if the earth surface were a perfect ellipsoid. For the ice shelf this is not such a big deal, since it rises no more than maybe a hundred meters above the water level, which is very close to the ellipsoid, but you should not ever try to derive any positional information directly from such an image on land.
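To get a feeling for the size of this effect, here is a minimal sketch using a locally flat reference surface: a target at height h above the reference appears shifted toward the sensor by about h / tan(θ), where θ is the incidence angle measured from the vertical. The incidence angle of 35 degrees, the example heights and the function name are assumptions for illustration.

```python
import math

def ground_displacement_m(height_m, incidence_deg):
    """Approximate shift toward the sensor for a target above the reference
    surface, using a locally flat approximation (hypothetical helper)."""
    return height_m / math.tan(math.radians(incidence_deg))

# Ice shelf surface about 100 m above the ellipsoid: a shift of about 143 m.
print(ground_displacement_m(100.0, 35.0))

# A 2000 m mountain on land: the shift grows to almost 3 km.
print(ground_displacement_m(2000.0, 35.0))
```

The shift scales linearly with elevation, which is why it matters little on the ice shelf but a lot in mountainous terrain.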

You can also see that noise levels in the radar data tend to be much higher than in optical imagery. After all we are talking here about a signal that is sent out across several hundred kilometers, is then reflected and a small fraction of it travels back across several hundred kilometers again to be recorded and analyzed. Overall extracting quantitative information from radar data is usually much more difficult than from optical imagery. But the great advantage is of course that it is not affected by clouds.

Since this might be confusing for readers who are also looking at images of this event elsewhere: the images shown here are all in UTM projection and approximately north oriented.

July 23, 2017 at 22:36

Just a minor point concerning the noise in a radar image (or “Radar” or “SAR”, depending on one’s preference…).

Yes, the images look “noisy”, but this is not due to the large distance between the satellite and the ground target, at least for the overwhelming majority of the targets typically seen in a radar scene. Instead, radar is a coherent imaging mechanism, rather like a laser, so within the detected image there are areas of constructive and destructive interference that are visible. In the radar community this is called “speckle”, which is also the term used in optical coherent imaging (from which the field draws a great deal of its development).

Speckle is not “noise” in the conventional sense; it is part of the imaging structure. You can’t get rid of it, although you can ameliorate its effects by spatial averaging or by trying to estimate the underlying backscatter using a model of some type. It follows, therefore, that processing of the radar scene requires a different approach for standard operations such as edge detectors and so on. Using classical methods from optical processing is bound to end in a poor result, hence the perception that processing the radar scene is more difficult than other forms of imagery. If one takes into account that speckle is part of the imaging process, then it’s somewhat more straightforward – not trivial, but more straightforward.
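The effect of spatial averaging can be sketched with a small simulation. It assumes the common textbook model of fully developed speckle, in which single-look intensity is the underlying backscatter multiplied by an exponentially distributed factor with unit mean; the scene values and the function name are made up for illustration.

```python
import numpy as np

def simulate_speckle(backscatter, looks, rng):
    """Multiply the true backscatter by unit-mean speckle that has been
    averaged over `looks` independent looks (assumed textbook model)."""
    shape = (looks,) + np.shape(backscatter)
    speckle = rng.exponential(1.0, size=shape).mean(axis=0)
    return backscatter * speckle

rng = np.random.default_rng(0)

# Made-up scene: a dark half and a bright half with constant backscatter.
scene = np.full((200, 200), 0.1)
scene[:, 100:] = 0.4

for looks in (1, 4, 16):
    img = simulate_speckle(scene, looks, rng)
    dark, bright = img[:, :100], img[:, 100:]
    # Ratio of standard deviation to mean, close to 1 / sqrt(looks)
    # in both halves even though their brightness differs.
    print(looks, dark.std() / dark.mean(), bright.std() / bright.mean())
```

The relative fluctuation is the same in the dark and the bright half, which is what makes it multiplicative, and averaging reduces it only at the cost of spatial resolution.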

I enjoy reading your posts. Keep it up!

July 23, 2017 at 23:25

Well – obviously you can go and redefine language and say this is not noise, it’s speckle. And indeed my explanation about signal intensity and distance could be construed to mean that the noise in radar imagery is the same as shot noise in optical images, which is not the case of course.

But in the end you are fooling yourself if you say: this is no real noise, it is speckle, good noise so to speak. Like any other form of noise it is interwoven into the actual information you are interested in and limits what can be extracted from the data, because it is uncorrelated with what you usually want to extract. And it is not, as your comment might be read to imply, a mere rendering artefact based on how the data is visualized.

July 28, 2017 at 02:10

At no stage did I say or imply “this is no real noise, it is speckle, good noise so to speak”. Nor am I redefining the language concerning the terms, nor did I say that it is a rendering artifact.

The point about speckle is that it does not behave like conventional noise in optical imagery. It is multiplicative in amplitude and has quite a specific distribution, which can be altered depending on the processing approach. So, conventional approaches to standard operations such as edge detection, or other filtering operations don’t always work very well. Early processing of radar imagery used standard methods and achieved poor results (poor edge fidelity, for instance). Modern software is more generalised, and can handle this type of situation.

I will take issue with another statement you have written. You have said:

“You can also see that noise levels in the radar data tend to be much higher than in optical imagery. After all we are talking here about a signal that is sent out across several hundred kilometers, is then reflected and a small fraction of it travels back across several hundred kilometers again to be recorded and analyzed.”

This is nonsense. The noise component you are referring to, which is from “…a signal that is sent out across several hundred kilometers, is then reflected and a small fraction of it travels back across several hundred kilometers again…” is an additive component of the reflected signal. This additive component represents the smallest signal one could expect to receive. It’s typically referred to as the noise equivalent sigma-naught (NES0), and it is well below the typical dynamic range. Speckle is quite different, and is amplitude-dependent (i.e. multiplicative).

I don’t envisage providing more replies here on this topic.

July 28, 2017 at 10:07

From my point of view our disagreement mostly seems to come from a different understanding of what noise in images is. Your idea seems to be that noise is something very specific in origin, and based on this interpretation you say speckle is not noise in the conventional sense, which is probably a correct statement under this presumption. But in my eyes the term noise is not tied to a specific origin, which is why I think limiting noise to noise of a specific origin amounts to redefining language.

In optical images you have the read noise of the sensor, which is of constant amplitude, so an additive component, and which is probably what you have in mind with conventional noise. And there is also shot noise, which I already mentioned, which is proportional to the square root of the signal, so somewhere between an additive and a multiplicative component so to speak. But both of these are often not the most significant components of noise in optical images you practically need to deal with.
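How differently these components scale with the signal can be shown with a small sketch comparing the signal-to-noise ratio under three simplified models. The read noise value, the signal levels (think of them as photoelectron counts for the optical cases) and the function name are assumptions for illustration.

```python
import math

def snr(signal, kind, read_noise=5.0, looks=1):
    """Signal-to-noise ratio under three simplified noise models."""
    if kind == "read":       # additive: constant standard deviation
        sigma = read_noise
    elif kind == "shot":     # Poisson statistics: sigma = sqrt(signal)
        sigma = math.sqrt(signal)
    elif kind == "speckle":  # multiplicative: sigma proportional to signal
        sigma = signal / math.sqrt(looks)
    else:
        raise ValueError(f"unknown noise model: {kind}")
    return signal / sigma

for s in (100.0, 10_000.0):
    print(s, [round(snr(s, k), 1) for k in ("read", "shot", "speckle")])
# 100.0 [20.0, 10.0, 1.0]
# 10000.0 [2000.0, 100.0, 1.0]
```

With purely additive noise a stronger signal helps a lot, with shot noise it helps somewhat, and with a purely multiplicative component it does not help at all; only averaging does.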

But all of this is ultimately mostly talk about semantics. The real issue should be the question of how limiting noise (or speckle if you want to make that distinction) is in practical use of the data. This is something that can be looked at completely without considering the terminology. And if I look at analysis of optical image data and imaging radar data in the literature, I find results of radar data interpretation much more frequently being speckle/noise limited than in the case of optical imagery. Yes, this is a subjective observation surely based on much too limited data, but I would be glad to be pointed to any examples where analysis of radar imagery is not speckle/noise limited.

I am probably going to write in more depth about the subject of noise in satellite data in a future post, but this will likely concentrate on optical data. While for optical image processing and analysis a lot of Open Source tools exist which allow readers to reproduce my observations, such tools are currently extremely scarce for radar data. This is a serious drawback both for me (I would need to invest time and money into proprietary tools or into my own implementations of algorithms) and for my readers.