When i recently wrote about the start of public distribution of Sentinel-3 data i indicated that i was going to write about this in more detail once SLSTR data is also available. This happened several weeks ago already but it took somewhat longer to work through this than originally anticipated – although based on the experience with Sentinel-2 this is not really that astonishing.

This first part is going to be about the basics, the background of the Sentinel-3 instruments and the form the data is distributed in. More specific aspects of the data itself will follow in a second part.

On Sentinel-3

I already wrote a bit about the management side of Sentinel-3 when reporting on the data availability. Here are some more remarks from the technical side. While Sentinel-1 and Sentinel-2 are both satellite systems with a single instrument, Sentinel-3 features multiple different and independent sensors. Sentinel-3 is quite clearly a partial follow-up to the infamous Envisat project – something that is not too prominently advertised by ESA because it is a clear reminder of that corpse still lying around in the basement above our heads. Sentinel-1 could be understood to be a successor for the ASAR instrument on Envisat while most other Envisat sensors are reborn on Sentinel-3. I will here only cover the OLCI and SLSTR instruments which are related to the MERIS and AATSR systems on Envisat.

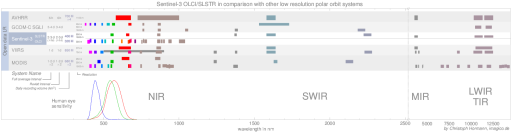

OLCI and SLSTR are included in my recent satellite comparison chart – here is an excerpt from that in comparison with other similar systems:

All of these are on satellites in a sun-synchronous polar orbit and record images continuously or at least for the dayside in resolutions between about 1000m and 250m. AVHRR is still running on four satellites but is to be replaced in the future with VIIRS (which currently runs on one prototype satellite). GCOM-C SGLI is still in planning and not yet flying. Apart from spectral and spatial resolution the most important characteristic of such satellites is the time of day they record. Equator crossing time for Sentinel-3 is 10:00 in the morning which is closest to MODIS-Terra at about 10:30 (the same is planned for GCOM-C). Since VIIRS is – both on Suomi NPP and on future JPSS satellites – going to cover a noon (13:30) time frame it could be that if MODIS-Terra ceases operations – which is not unlikely to happen at more than 16 years of age – Sentinel-3 will be the only public source for morning time frame imagery in this resolution class.

So much for context. Now regarding the instruments: In short – OLCI is a visible light/NIR camera with 300m resolution in all spectral bands and SLSTR covers the longer wavelengths, starting from green, with 500m resolution (for visible light to SWIR) and 1000m resolution (for thermal IR). This somewhat peculiar division with overlapping spectral ranges comes from the MERIS/AATSR heritage.

The most unusual aspect about the spectral characteristics is that OLCI features quite a large number of fairly narrow spectral bands at a relatively high spatial resolution of 300m.

OLCI records a 1270km wide swath. This means it takes three days to achieve full global coverage. For comparison MODIS covers 2330km and takes two days for full global coverage while VIIRS with 3000km coverage achieves full daily coverage. With the second Sentinel-3 satellite planned for next year OLCI will offer a combined coverage frequency comparable to MODIS. That being said OLCI is nonetheless very different because it looks slightly sideways. To cover 2330km from 705km altitude MODIS has a viewing angle of 55 degrees to both sides. OLCI on Sentinel-3 looks about 22 degrees to the east and 46 degrees to the west. In other words: it faces away from the morning sun coming from the east to avoid sun glint. This also means the average local time of the imagery is somewhat earlier than what is indicated by the 10:00 equator crossing time.
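The relation between viewing angle and swath width used above can be checked with a bit of spherical geometry. The following is only a rough sketch: it assumes a spherical Earth with a mean radius of 6371km and, for Sentinel-3, an orbit altitude of 814.5km, which is a figure assumed here and not taken from the text. The function names are made up for illustration.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius, spherical-Earth assumption


def ground_distance_km(altitude_km, view_angle_deg):
    """Distance along the ground from the sub-satellite point to the
    point seen at the given off-nadir view angle."""
    theta = math.radians(view_angle_deg)
    # Law of sines in the triangle Earth centre / satellite / ground point
    # gives the sine of the incidence angle at the ground.
    sin_incidence = (EARTH_RADIUS_KM + altitude_km) / EARTH_RADIUS_KM * math.sin(theta)
    if sin_incidence > 1.0:
        raise ValueError("view direction misses the Earth")
    central_angle = math.asin(sin_incidence) - theta
    return EARTH_RADIUS_KM * central_angle


def swath_km(altitude_km, angle_one_side_deg, angle_other_side_deg):
    """Total swath width for a view that may be asymmetric."""
    return (ground_distance_km(altitude_km, angle_one_side_deg)
            + ground_distance_km(altitude_km, angle_other_side_deg))


# MODIS: 705 km altitude, 55 degrees to both sides (figures from the text)
modis = swath_km(705.0, 55.0, 55.0)

# OLCI: 22 degrees east, 46 degrees west (from the text);
# 814.5 km altitude is an ASSUMED value, not from the text
SENTINEL3_ALTITUDE_KM = 814.5
olci_east = ground_distance_km(SENTINEL3_ALTITUDE_KM, 22.0)
olci_west = ground_distance_km(SENTINEL3_ALTITUDE_KM, 46.0)

print(f"MODIS swath: {modis:.0f} km")
print(f"OLCI: {olci_east:.0f} km east + {olci_west:.0f} km west "
      f"= {olci_east + olci_west:.0f} km")
```

With these assumptions the MODIS figure comes out very close to 2330km and the OLCI sum lands near the 1270km quoted above. The view angles in the text are rounded, so an exact match should not be expected.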

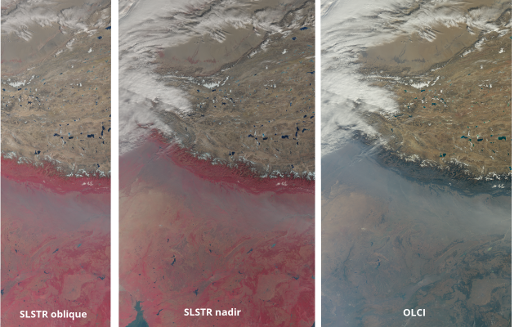

The SLSTR instrument is even more peculiar. It offers two separate views: one is similar to and overlapping with the OLCI view and 1400km wide, the other is narrower (only 740km wide) and not tilted to the west like the others but tilted backwards along the orbit of the satellite.

So what you get from OLCI and SLSTR together is three separate data sets:

- The OLCI imagery, 1270km wide, view tilted 12.6 degrees to the west

- The SLSTR nadir images, 1400km wide, likewise tilted to the west (so the ‘nadir’ is somewhat misleading) and fully overlapping with the OLCI coverage. The additional extent goes to the east so the SLSTR view is somewhat less tilted.

- The SLSTR oblique/rear images, 740km wide, not tilted sideways

Here is a visual example of how this looks (with SLSTR in classic false color IR):

Getting the data

But we are getting ahead of ourselves here. The public Sentinel-3 data is available on the Sentinel-3 Pre-Operations Data Hub. What i am writing about here is the level 1 data currently released there. I am also not discussing the reduced resolution version of the OLCI data, just the full resolution OLCI and SLSTR data.

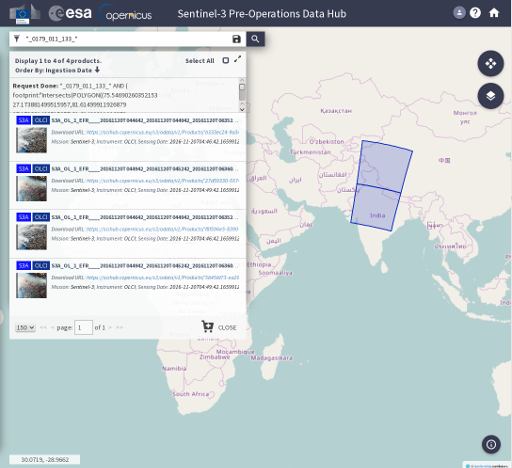

When you grab an OLCI data package from there it has a file name like this:

S3A_OL_1_EFR____20161120T044642_20161120T044942_20161120T063521_0179_011_133_2340_SVL_O_NR_002.zip

If you have read my Sentinel-2 review that probably looks painfully familiar. Excessively long, three time stamps, just in a different order. The first two time stamps are the start and end of recording, the third one is the processing time. The footprints shown in the download interface are pretty accurate – at least after they fixed the obvious flaws when footprints crossed the edges of the maps after the first weeks. Don’t get confused when you frequently get every result twice (with different UUIDs but otherwise identical).

The components of the above file name that are actually really useful are the date of acquisition (20161120T044642), the relative orbit number (133) and the along-track coordinate (2340). The way the data is packaged is quite similar to Landsat (which is somewhat ironic since Sentinel-2 data is packaged much less like Landsat). The relative orbit number is like the Landsat path – just in order of acquisition rather than spatial order – and the along-track coordinate is a bit like the Landsat row. Cuts along the track usually seem to be at roughly the same position and are made so that individual images have roughly square dimensions – but not precisely, so numbers vary slightly from orbit to orbit. A number of other things are good to know when you look for data:

- OLCI data is only recorded for the day side and only for areas with sun elevation above 10 degrees (or zenith angles of less than 80 degrees). This is quite restrictive, especially if you consider the early morning time frame recorded. Right now (end of November) that limit is at about 60 degrees northern latitude. MODIS records down to much lower sun positions (at least 86 degrees zenith angle AFAIK).

- SLSTR is recorded continuously so you have descending and ascending orbit images. Only the thermal IR data is really useful for the night side of course.

- The packages have no overlap in content.

- Due to the view being tilted to the west in the descending orbit, i.e. to the right, images of both OLCI and SLSTR cover the north pole but not the south pole. The southern limit of OLCI coverage is at about 84.4 degrees south, SLSTR coverage ends at 85.8 degrees.

In the example shown the next package along the orbit is

S3A_OL_1_EFR____20161120T044942_20161120T045242_20161120T063600_0179_011_133_2520_SVL_O_NR_002.zip

– identical except for the times and the along-track coordinate.
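The useful parts of these names can be pulled out with a regular expression. This is a minimal sketch based only on the two example names above. The group names are made up here, and reading the two fields before the relative orbit number as a duration in seconds and a cycle number is an assumption, not something stated in the text.

```python
import re
from datetime import datetime

# Field layout read off the two example names. Group names are
# hypothetical; "duration" and "cycle" are assumed meanings.
NAME_RE = re.compile(
    r"^(?P<mission>S3[A-Z])_(?P<instrument>[A-Z]{2})_(?P<level>\d)_"
    r"(?P<product>[A-Z_]{6})_"
    r"(?P<start>\d{8}T\d{6})_(?P<stop>\d{8}T\d{6})_(?P<processed>\d{8}T\d{6})_"
    r"(?P<duration>\d{4})_(?P<cycle>\d{3})_"
    r"(?P<relative_orbit>\d{3})_(?P<along_track>\d{4})_"
)
TIME_FORMAT = "%Y%m%dT%H%M%S"


def parse_package_name(name):
    """Split a package file name into a dict of fields."""
    match = NAME_RE.match(name)
    if match is None:
        raise ValueError(f"unexpected package name: {name}")
    fields = match.groupdict()
    for key in ("start", "stop", "processed"):
        fields[key] = datetime.strptime(fields[key], TIME_FORMAT)
    for key in ("relative_orbit", "along_track"):
        fields[key] = int(fields[key])
    return fields


def follows(first, second):
    """True if `second` starts where `first` ends on the same relative orbit."""
    return (first["relative_orbit"] == second["relative_orbit"]
            and first["stop"] == second["start"])


a = parse_package_name(
    "S3A_OL_1_EFR____20161120T044642_20161120T044942_20161120T063521"
    "_0179_011_133_2340_SVL_O_NR_002.zip")
b = parse_package_name(
    "S3A_OL_1_EFR____20161120T044942_20161120T045242_20161120T063600"
    "_0179_011_133_2520_SVL_O_NR_002.zip")

print(a["start"], a["relative_orbit"], a["along_track"])
print("b follows a:", follows(a, b))
```

Since the packages have no overlap in content, the end time of one package matching the start time of the next is a simple way to chain them along an orbit.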

Package content

When you unpack the zip you get a directory with the same base name and a ‘.SEN3’ at the end. When we look inside we might be positively surprised since we find only 30 files. For SLSTR that increases to 111 but this is still quite manageable compared to Sentinel-2. Of course, like with Sentinel-2, packaging everything in a zip package is still very inefficient since the data is already in compressed file formats.

Image data is in NetCDF format. GDAL can read NetCDF and it can also read Sentinel-3 NetCDF but at least for the moment this is limited to basic reading of the data; any higher level functionality you need to take care of by hand. I assume GDAL developers will at some point add support for the specific data structures of Sentinel-3 data but that might take some time.

What you have in the OLCI package is

- One xml file xfdumanifest.xml with various metadata that might be useful, including for example also a footprint geometry.

- 21 NetCDF files with names of the form Oa??_radiance.nc with ‘??’ being the channel number for the 21 OLCI spectral channels. This contains the actual image data.

- Eight additional NetCDF files with various supplementary data.

It is great to have only 30 different files here so i am not complaining but the question is of course why they do not put all the data in one NetCDF file and save the need for a zip package? This is how MODIS data is distributed for example (in HDF format but otherwise same idea). I am just wondering…
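The OLCI package layout described above is simple enough to check mechanically. Here is a small sketch that compares a list of file names against the expected manifest and the 21 radiance files. It assumes the channel numbers are zero-padded from 01 to 21, and the function names are made up for illustration. The eight supplementary files are only collected, not checked by name, since their names are not listed here.

```python
from pathlib import Path

MANIFEST = "xfdumanifest.xml"
# Assumes zero-padded channel numbers 01..21 (matching 'Oa??_radiance.nc')
RADIANCE_FILES = [f"Oa{channel:02d}_radiance.nc" for channel in range(1, 22)]


def check_olci_listing(names):
    """Return (missing, supplementary) for an iterable of file names.

    missing:       expected files that are not present
    supplementary: other NetCDF files found besides the radiance files
    """
    present = set(names)
    expected = {MANIFEST, *RADIANCE_FILES}
    missing = sorted(expected - present)
    supplementary = sorted(n for n in present - expected if n.endswith(".nc"))
    return missing, supplementary


def check_olci_package(directory):
    """Same check for an unpacked .SEN3 directory on disk."""
    return check_olci_listing(
        p.name for p in Path(directory).iterdir() if p.is_file())
```

Calling check_olci_package() on an unpacked .SEN3 directory should, for a complete package as described above, give an empty list of missing files and eight supplementary ones.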

In the SLSTR packages there is a bit more stuff but overall it is quite similar:

- One xml file xfdumanifest.xml with metadata.

- 34 NetCDF files for the image data of the 9+2 spectral bands with names of the form S?_radiance_[abc][no].nc or [SF]?_BT_i[no].nc with ‘?’ being the channel number. The ‘n’ or ‘o’ at the end indicates the nadir or oblique view as described above. ‘a’, ‘b’ or ‘c’ indicates different redundant sensors for the SWIR bands or a combination of them.

- For each of the image data files there is a quality file with ‘quality’ instead of ‘radiance’ or ‘BT’ in the file name.

- The other files are additional NetCDF packages with supplementary data – like with OLCI but different file names. Most of them are in different versions for the n/o and a/b/c/i variants.
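The SLSTR naming scheme in the list above can be turned into a small classifier. This is a sketch only: the regular expression merges the two name patterns and the ‘quality’ variant into one, so it is slightly more permissive than the patterns given above, and the group names are made up for illustration.

```python
import re

# Merged from the patterns S?_radiance_[abc][no].nc and [SF]?_BT_i[no].nc,
# plus the matching 'quality' files. Slightly more permissive than the
# originals; group names are hypothetical.
SLSTR_RE = re.compile(
    r"^(?P<band>[SF]\d)_(?P<kind>radiance|BT|quality)_"
    r"(?P<variant>[abci])(?P<view>[no])\.nc$"
)
VIEWS = {"n": "nadir", "o": "oblique"}


def classify_slstr_file(name):
    """Return (band, kind, variant, view), or None for any other file."""
    match = SLSTR_RE.match(name)
    if match is None:
        return None
    return (match["band"], match["kind"], match["variant"], VIEWS[match["view"]])


for name in ("S5_radiance_bo.nc", "F1_BT_in.nc",
             "S2_quality_an.nc", "xfdumanifest.xml"):
    print(name, "->", classify_slstr_file(name))
```

Grouping the files of a package by the view and variant fields is then a quick way to pick, for example, only the nadir view files.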

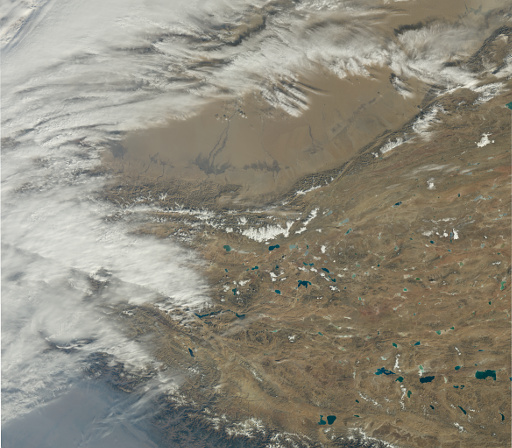

Now if you pick the right ones from the 21 OLCI image data files, assemble them into an RGB image, which is fairly straight away with GDAL, you get – with some color balancing afterwards – something like this:

That might look pretty reasonable at first glance but it is actually quite strange that the data comes in this form. More on that and other details on the data will come in the second part of this review.

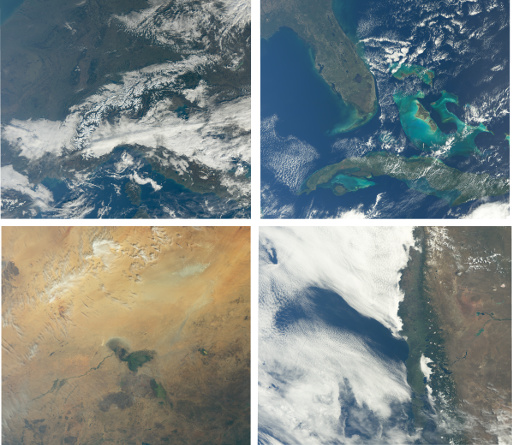

While you wait, here are a few more image examples:

March 29, 2017 at 15:04

You write “Now if you pick the right ones from the 21 OLCI image data files, assemble them into an RGB image, which is fairly straight away with GDAL, you get – with some color balancing afterwards – something like this”

Could you please explain how you managed to do this with GDAL? For me it doesn’t work, I cannot load the datasets with GDAL:

$ gdalinfo --version

GDAL 2.1.3, released 2017/20/01

$ gdalinfo Oa08_radiance.nc

Warning 1: No UNIDATA NC_GLOBAL:Conventions attribute

gdalinfo failed - unable to open 'Oa08_radiance.nc'.

March 29, 2017 at 15:42

Hello Rouven,

there seems to be some incompatibility introduced in GDAL 2.x that prevents it from opening the files. But GDAL 1.x works fine. You will however likely need to set GDAL_NETCDF_BOTTOMUP – see http://www.gdal.org/frmt_netcdf.html

Yes, maybe not really straight away – but that is the kind of problem you get used to…

January 30, 2018 at 15:26

With GDAL 2.2.2 it works again.

$ gdalinfo --version

GDAL 2.2.2, released 2017/09/15

But GDAL is painfully slow to read the original files. GDAL performs much better when the files are first copied using nccopy with deflation level = 0.

$ nccopy -d0 Oa08_radiance.nc Oa08_radiance.nc.temp

January 30, 2018 at 15:44

Correct, the GDAL 2.x problems seem to be fixed now.

I have not checked but i kind of doubt nccopy is that much faster than GDAL in decompressing the data. Ultimately this is rarely going to be a bottleneck as long as you make sure you decompress only once and not every time you access the data. Whether you do this with GDAL (converting to uncompressed GeoTIFF for example) or with nccopy probably does not make much of a difference.

And of course in the end Sentinel-3 data comes with the same double compression nonsense as Sentinel-2 (i.e. compressed image data formats inside a compressed ZIP file).