I have in the past commented critically on the vector tiles hype that seems to engulf and dominate much of the digital cartography sector these days. What i dread in particular is the really saddening effect this trend seems to have in some cases on the cartographic ambition of map producers – the most iconic example being maybe swisstopo – whose traditional topographic maps are considered by many one of the pinnacles of high quality map design with uncompromising cartographic standards and ambitions – and which now sinks to the level of presenting something as crude as this as a serious contribution to their digital services.

One of the core issues of this trend in my eyes has been that it has been fueled largely by economic factors (in other words: cost cutting of map providers) while practically across the board being executed on the engineering level in a highly superficial and sloppy way. If you look at software development projects in the domain you can almost universally see the short term economic goals in the foreground and a lack of capacity and/or willingness to thoroughly analyze the task from the ground up, to design and develop a solution with a decent level of genericity and scalability, and to invest the time and resources a decent solution calls for.

But while i am confident that this critique is justified i realize that for many people working within the vector tiles hype so to speak it is hard to follow these critical considerations without specific examples. And i also want to better understand myself what my mostly intuitive critical view of the vector tiles trend and the technological developments around it is actually caused by and better identify and where possible quantify the deficits.

Starting at the basics

What i will therefore try to do is exactly what software engineers in the domain seem to do much too rarely: Look at the matter on a very fundamental level. Of course i anticipate that there are those who say: These tools are meant to render real world maps and not abstract tests. Why should we care about such tests? Well – as the saying goes: You have to crawl before you can learn to walk. To understand how renderers perform in rendering real world maps you need to understand how they work in principle and for that looking at abstract tests is very useful. That you rarely see abstract tests like the ones shown here being used and discussed for map rendering engines is in itself indicative of a lack of rigorous engineering in the field IMO.

And i will start at the end of the data processing and rendering toolchain – the actual rendering – because this is where the rubber meets the road so to speak. We are talking about map rendering here which ultimately always means generating a rasterized visual representation of the map. And despite some people maybe believing that the vector tiles trend removes that part of the equation it of course does not.

In this blog post i will look at the very fundamental basics of what is being rendered by map renderers, that is polygons. The most frequent visual elements in maps that rendering engines have to render are polygons, lines, point symbols and text. And a line of a defined width is nothing different from a long and narrow polygon. Text contains curved shapes but they can with controllable accuracy be converted into polygons. Not all renderers do this in practical implementation, some have distinct low level rendering techniques for non-polygon features and there are of course also desirable features of renderers that do not fit into this scheme. So i am not saying that polygon rendering ability is the only thing that matters for a map renderer but it is definitely the most basic and fundamental feature.

As you can see if you scroll down i only look at simple black polygons on white background examples and ignore the whole domain of color rendering for the moment (color management in map renderers or the lack of it is another sad story to write about another day). Because of the high contrast this kind of test very well brings out the nuances in rendering quality.

The difficulties of actually testing the renderers

I am looking specifically at client side map renderers – that is renderers designed to be used for rendering online maps on the device of the user (the client). This creates several difficulties:

- They typically run in the web browser which is not exactly a well defined environment. Testing things on both Chromium and Firefox on Linux i observed noticeable differences between the two – which however do not significantly affect my overall conclusions. The samples shown are all from Chromium. I will assume that the variability in results on other browsers and other platforms is similar. Needless to say, if the variability in results due to the client environment is strong, that is a problem for practical use of these renderers on its own. After all as a map designer i need predictable results to design for.

- They are only the final part of an elaborate and specialized chain of data processing designed for cost efficiency which makes it fairly tricky to analyze the renderer performance in isolation without this being distorted by deficits and limitations in the rest of the toolchain.

I managed to address the second point by running the first tests based on GeoJSON files rather than actual vector tiles. All three renderers evaluated support GeoJSON input data and this way you can reliably avoid influences of lossy data compression affecting evaluation of the rendering performance (which is – as i will explain later – a serious problem when practically using these renderers with actual vector tiles).

The test data i created, which you can see in the samples below, is designed to allow evaluating the rendering performance while rendering polygons.

Test patterns used for evaluating polygon rendering

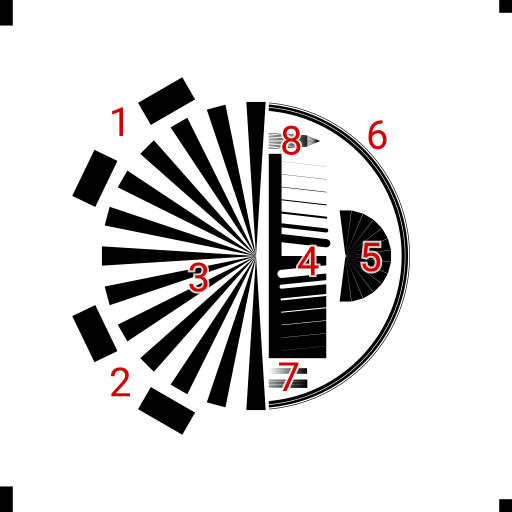

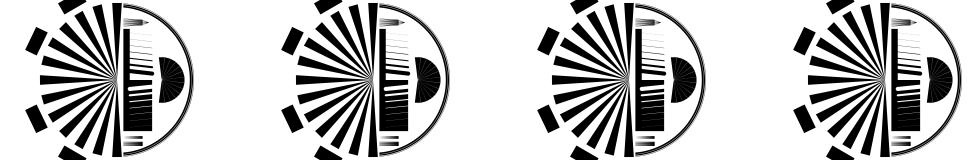

I will quickly explain the different components

- Adjacent polygon test with four touching rectangles that are a single multipolygon geometry (which is technically invalid – but all renderers accept this). At two different rotations.

- Adjacent polygon test with four touching rectangles that are separate geometries. This is the classic case where AGG produces artefacts (as explained in detail elsewhere).

- A Siemens star like test pattern showing resolution, aliasing and color mixing biases.

- Dent pattern to show lines of various widths as both positive (black on white) and negative (white on black) features. Shows any bias between those two cases, artefacts in narrow feature rendering and step response at a straight polygon edge in general.

- Inverse Siemens star like pattern with narrow gaps of variable width testing geometric accuracy in rendering and geometry discretization artefacts.

- Test for rendering uniformity on circular arcs. Contains both a narrow black line as well as a white gap of same size for checking equal weight of those.

- Fine comb gradients testing color mixing and aliasing artefacts.

- Fan comb for testing aliasing artefacts.
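To give an idea of what such a test geometry looks like as data, here is a minimal sketch in Python that writes a Siemens star like pattern as a GeoJSON multipolygon. This is not the script used to produce the actual test patterns: the function name `siemens_star`, the center, the radius and the number of spokes are all made up for illustration, and the wedges are plain triangles.

```python
import json
import math


def siemens_star(cx, cy, radius, spokes):
    """Return a GeoJSON MultiPolygon with `spokes` wedges and equally wide gaps."""
    step = math.pi / spokes  # angular width of one wedge (and of one gap)
    polygons = []
    for i in range(spokes):
        a0 = 2 * i * step   # start angle of the wedge
        a1 = a0 + step      # end angle, the following gap has the same width
        ring = [
            [cx, cy],
            [cx + radius * math.cos(a0), cy + radius * math.sin(a0)],
            [cx + radius * math.cos(a1), cy + radius * math.sin(a1)],
            [cx, cy],       # GeoJSON rings have to be closed explicitly
        ]
        polygons.append([ring])
    return {"type": "MultiPolygon", "coordinates": polygons}


# Hypothetical placement: a small star around the coordinate origin (lon/lat).
feature = {
    "type": "Feature",
    "properties": {},
    "geometry": siemens_star(0.0, 0.0, 0.01, 36),
}
collection = {"type": "FeatureCollection", "features": [feature]}
geojson_text = json.dumps(collection)
```

The resulting `geojson_text` can be saved to a file and loaded as a GeoJSON source. All wedges meet in the center point, which is exactly where the pattern probes the resolution limit of a renderer.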

The test subject

I tested the following client side renderers

- Maplibre GL – the FOSS fork of Mapbox GL, which was essentially the first browser based vector tiles renderer and can probably be considered the market leader today (though with the fork and development lines diverging and becoming incompatible at some point this could change).

- Tangram – originally a project of Mapzen this is the other WebGL renderer that has significant use. Having been started by Mapzen its orientation was a bit less narrowly focused on short term commercial needs but it is essentially a ‘me too’ response of Mapzen to Mapbox without much own ambition in innovation (which was a bit of a general problem of Mapzen).

- Openlayers – the veteran of web map libraries took a very different approach and is now a serious contender in the field of client side map renderers. It uses Canvas rather than WebGL.

For comparison i also show classic Mapnik rendering. This is essentially meant to represent all AGG based renderers (so also Mapserver).

All examples are rendered in a size of 512×512 pixel based on GeoJSON data (in case of the client side renderers) or from a PostGIS database in case of Mapnik.

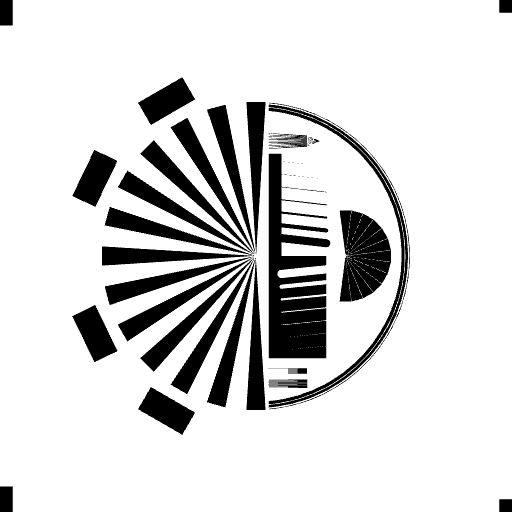

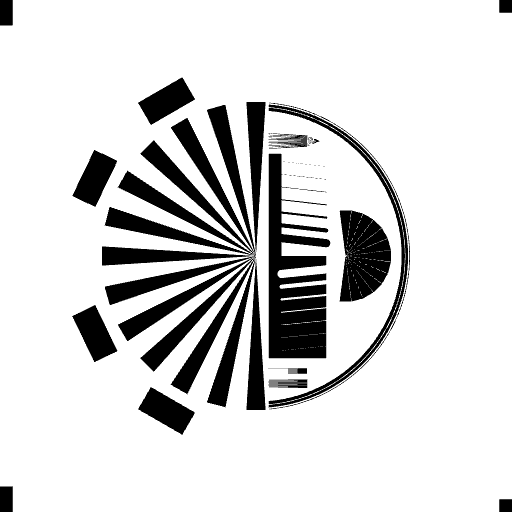

What serves as reference is – as explained in more detail in my previous discussion of polygon rendering – a supersampled rendering, the brute force approach to rendering: performing plain rasterization at a much higher resolution (in this case, to represent all the fine details in the test pattern, i chose 32768×32768 pixel – that is 64×64 supersampling). When you size that down to 512×512 pixel using plain pixel averaging you get the following.

Supersampled rendering using equal weight pixel averaging (box filter)
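The principle of supersampling with a box filter can be sketched in a few lines of Python. This is an illustration only and not the code used for the reference rendering: the function names are made up, the polygon test is a simple even-odd rule, and the example uses a tiny 4×4 pixel image with 16×16 samples per pixel to keep it fast.

```python
def point_in_polygon(x, y, ring):
    """Even-odd rule test of a point against a closed ring of (x, y) tuples."""
    inside = False
    for (x0, y0), (x1, y1) in zip(ring, ring[1:]):
        if (y0 > y) != (y1 > y):  # the edge crosses the horizontal line through y
            x_cross = x0 + (y - y0) * (x1 - x0) / (y1 - y0)
            if x < x_cross:
                inside = not inside
    return inside


def render_supersampled(ring, size, factor):
    """Sample factor x factor points per pixel and average them (box filter).

    Returns rows of coverage values between 0 (outside) and 1 (inside).
    """
    rows = []
    for py in range(size):
        row = []
        for px in range(size):
            hits = 0
            for sy in range(factor):
                for sx in range(factor):
                    # sample at the center of each sub-pixel
                    x = px + (sx + 0.5) / factor
                    y = py + (sy + 0.5) / factor
                    if point_in_polygon(x, y, ring):
                        hits += 1
            row.append(hits / (factor * factor))
        rows.append(row)
    return rows


# A triangle in pixel coordinates whose diagonal edge cuts pixels in half.
triangle = [(0.0, 0.0), (4.0, 0.0), (0.0, 4.0), (0.0, 0.0)]
coverage = render_supersampled(triangle, size=4, factor=16)
```

Pixels fully inside the triangle get a coverage of 1, pixels fully outside get 0, and the pixels cut by the diagonal edge get a value close to, but not exactly, one half. That small deviation is the discrete approximation inherent to supersampling.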

In terms of general precision and edge acuity this is the gold standard. However plain averaging of the pixels is prone to aliasing artefacts as you can observe in the tests for that. Other filter functions can be used to reduce that – though always at some loss in edge acuity and potentially other artefacts. Here are the results with the Mitchell filter function – which massively reduces aliasing at a minor cost in edge acuity.

Box filtered reference (left) in comparison to Mitchell filtered rendering
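For those not familiar with it: the Mitchell filter is a piecewise cubic kernel with two parameters B and C. The sketch below assumes the common choice B = C = 1/3, which is an assumption on the exact parameters and not taken from the rendering setup used here. The one-dimensional `resample_row` helper is hypothetical and only meant to show how the kernel weights are applied when sizing down.

```python
import math


def mitchell(x, b=1.0 / 3.0, c=1.0 / 3.0):
    """Mitchell-Netravali kernel, x measured in output pixels."""
    x = abs(x)
    if x < 1.0:
        return ((12 - 9 * b - 6 * c) * x ** 3
                + (-18 + 12 * b + 6 * c) * x ** 2
                + (6 - 2 * b)) / 6.0
    if x < 2.0:
        return ((-b - 6 * c) * x ** 3
                + (6 * b + 30 * c) * x ** 2
                + (-12 * b - 48 * c) * x
                + (8 * b + 24 * c)) / 6.0
    return 0.0  # the kernel is zero beyond two pixels distance


def resample_row(samples, factor):
    """Size a row of supersampled values down by `factor` using Mitchell weights."""
    out = []
    for i in range(len(samples) // factor):
        center = (i + 0.5) * factor  # output pixel center in sample units
        total = 0.0
        weight_sum = 0.0
        for j in range(math.floor(center - 2 * factor),
                       math.ceil(center + 2 * factor)):
            w = mitchell((j + 0.5 - center) / factor)
            k = min(max(j, 0), len(samples) - 1)  # repeat the border sample
            total += w * samples[k]
            weight_sum += w
        # Normalize; results can slightly leave the 0..1 range at sharp
        # edges because the kernel has negative lobes.
        out.append(total / weight_sum)
    return out
```

In contrast to the box filter every output pixel is influenced by samples up to two pixels away, which is what suppresses aliasing and at the same time softens edges a bit.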

Test results

Here are the results rendering the test geometries in their original form (as GeoJSON data).

Mapnik

reference (left) in comparison to Mapnik rendering

Mapnik is – as you can expect – fairly close to the reference. That includes similar aliasing characteristics. On the fine comb gradient it is even better since it precisely calculates the pixel fractions covered while supersampling does a discrete approximation.

The main flaw is the coincident edge artefacts you can observe on the tests for that on the lower left. Notice however these only occur with coincident edges between different geometries. Within a single geometry (tests on the upper left) this problem does not manifest (though practically using this as a workaround does likely have performance implications). The coincident edge effect also has an influence on the narrow gaps of the inverse Siemens star on the right. In other words: This does not depend on edges to be exactly coincident, it has an influence to some extent anywhere where several polygons partly cover a pixel.
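The arithmetic behind the coincident edge artefact can be shown with a simplified model. This is a sketch of the general principle of blending each geometry separately with its pixel coverage as alpha. It is not taken from the Mapnik or AGG source code, and the function name is made up.

```python
def composite_over(background, color, coverage):
    """Blend `color` over `background` using the coverage fraction as alpha."""
    return background * (1.0 - coverage) + color * coverage


WHITE, BLACK = 1.0, 0.0

# Two black polygons meet along an edge running through the middle of a pixel.
# Each covers half of the pixel, together they cover it completely.
pixel = WHITE
pixel = composite_over(pixel, BLACK, 0.5)  # first polygon
pixel = composite_over(pixel, BLACK, 0.5)  # second polygon, blended separately
separate = pixel                           # 0.25: a light seam remains

# The same two halves as parts of a single geometry: coverage is summed first.
combined = composite_over(WHITE, BLACK, 0.5 + 0.5)  # 0.0: fully black
```

In this model the second polygon only darkens half of what the first one left over, so a quarter of the white background shines through along the shared edge. The same happens in weaker form wherever several polygons partly cover the same pixel.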

Maplibre GL

Maplibre GL is interesting in the way that it has an antialias option which is off by default and without which the renderer produces results too ridiculous to show here. So what is shown here are the results with antialias: true.

reference (left) in comparison to Maplibre GL rendering

Even with the antialias option the results are bad. Primarily because of a massive bias in rendering enlarging all polygons by a significant fraction of a pixel. This is visible in particular on the fine comb gradients which are turned completely black and the dent pattern where the narrow gaps on the bottom are invisible while the thin dents on top are more or less indistinguishable in width.

In addition to this bias – which turns many of the test patterns non-functional – diagonal edges show significantly stronger aliasing (stair step artefacts), narrow gaps between polygons are non-continuous in some cases and fairly non-uniform on the circle test.
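Why an enlargement by a fraction of a pixel is enough to turn a fine comb completely black can be illustrated with a simple model. The bias value of a quarter pixel used here is hypothetical, i did not measure the actual amount, and the function is only a back-of-the-envelope sketch.

```python
def comb_darkness(bar_width, period, bias=0.0):
    """Mean darkness (0 = white, 1 = black) of a comb of black bars.

    bar_width and period are in pixels, bias is the hypothetical distance
    (also in pixels) by which every polygon edge is moved outwards.
    """
    rendered_width = bar_width + 2.0 * bias  # each bar grows on both sides
    return max(0.0, min(1.0, rendered_width / period))


ideal = comb_darkness(0.5, 1.0)              # 0.5: medium gray
biased = comb_darkness(0.5, 1.0, bias=0.25)  # 1.0: gaps closed, solid black
```

The same reasoning applies to the narrow gaps of the dent pattern: any gap narrower than twice the bias disappears entirely.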

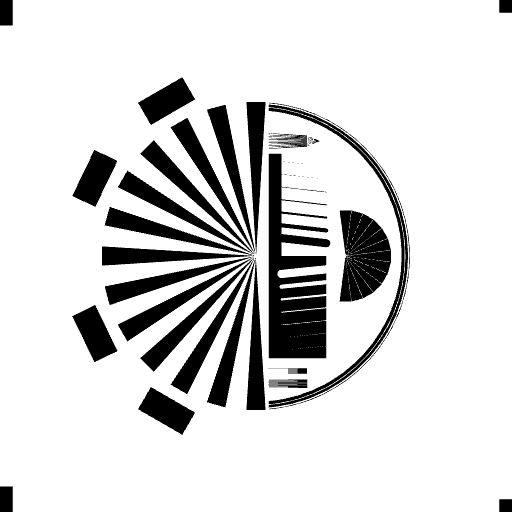

Tangram

reference (left) in comparison to Tangram rendering

Tangram does not have the bias Maplibre GL shows: positive and negative shapes on polygons are rendered in a matching fashion. But it shows similar levels of aliasing which produce a significant amount of high frequency noise even on straight lines and smooth edges like the circle test. Thin lines are rendered proportionally in weight but non-continuously. And the comb gradients produce massive artefacts.

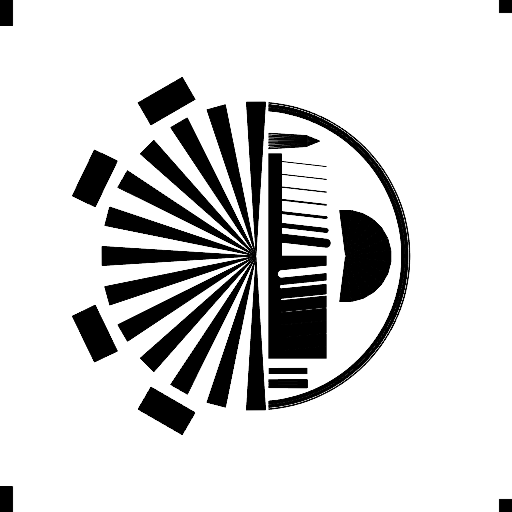

OpenLayers

reference (left) in comparison to Openlayers rendering

OpenLayers is very different in its technical basis of rendering and this shows in the results. In many ways it is the closest to Mapnik and the reference rendering and it shows the least edge aliasing artefacts of all the client side renderers – and accordingly also the clearest representation of the circle test. It however also has its issues: in particular it shows the coincident edge artefacts similar to AGG based renderers (both on the separate and combined geometries), and it has issues in proportionally rendering narrow polygon features – including some bias towards the dark (positive) lines compared to the bright (negative) lines.

With actual vector tiles

So far i have shown test results of vector tiles renderers not using actual vector tiles – to look specifically at the rendering quality and precision and not at anything else. But the question is of course: Do they perform this way, for better or worse, also with actual vector tiles?

The question is a bit tricky to answer because vector tiles are an inherently lossy format of storing geometry data. Coordinates are represented as integers of limited accuracy or in other words: They are rounded to a fixed grid. In addition most vector tile generators seem to inevitably perform a lossy line simplification step before the coordinate discretization and the combination of the two tends to lead to hard to predict results.

The PostGIS ST_AsMVTGeom() function for example (which is meant to be a generic all purpose function to generate geometries for vector tiles from PostGIS data) inevitably performs an ST_Simplify() step without the option to turn that off or adjust its parameters.
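The coordinate discretization part of this is easy to quantify. The following sketch rounds a coordinate to the integer grid of a tile and expresses the grid spacing in pixels. The function names are made up, and it assumes that a single tile fills the whole rendered image, which is a simplification.

```python
def quantize(coord, tile_min, tile_size, extent=4096):
    """Round a coordinate to the integer grid of a tile, returns the grid index."""
    return round((coord - tile_min) / tile_size * extent)


def dequantize(index, tile_min, tile_size, extent=4096):
    """Map an integer grid index back to the original coordinate space."""
    return tile_min + index / extent * tile_size


def grid_step_in_pixels(render_size, extent=4096):
    """Spacing of the tile grid measured in pixels of the rendered image."""
    return render_size / extent


# One tile with extent 4096 shown at 512 pixels: grid points are 1/8 pixel
# apart, so rounding moves a vertex by up to 1/16 pixel along each axis.
step = grid_step_in_pixels(512)
max_error = step / 2.0
```

With an extent of 65536 the grid spacing at 512 pixels shrinks to 1/128 pixel. Line simplification comes on top of that and is not covered by this sketch.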

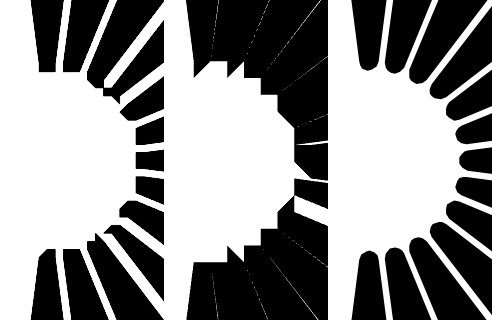

So what i did first to test if there is any difference between how GeoJSON data and vector tile data are rendered was to generate very high resolution vector tiles (extent 65536) as reference. However it seems that only Openlayers actually works with this kind of high resolution vector tiles directly, both Maplibre GL and Tangram seem to reduce the resolution on the client side (the motivation for which eludes me). Hence evaluation of these renderers is limited to normal low resolution tiles.

Client side coordinate discretization in overzoomed display based on the same vector tile data in Maplibre GL (left) and Tangram (middle) in comparison to Openlayers (right)

Here are examples generated with the PostGIS ST_AsMVTGeom() default extent of 4096 – in comparison to the GeoJSON tests shown above.

Maplibre GL rendering from GeoJSON data (left) in comparison to vector tiles data (extent 4096)

Tangram rendering from GeoJSON data (left) in comparison to vector tiles data (extent 4096)

Openlayers rendering from GeoJSON data (left) in comparison to vector tiles data (extent 4096)

As you can see the differences are small and mostly in those areas where aliasing artefacts are strong already. So the principal characteristics of the renderers are the same when used with vector tiles compared to uncompressed geometry data.

What this however also shows is that vector tiles are an inherently lossy format for geometry representation and that there is no such thing as a safe vector tiles resolution beyond which the results are indistinguishable from the original data or even where the difference does not exceed a certain level. Keep in mind that the test patterns used are primarily rendering tests meant to show rendering performance. They are not specifically intended as test patterns to evaluate lossy vector data compression. Also it seems that the resolution of 4096 steps when generating tiles is pretty much on the high side in practical use of vector tiles and the level of lossy compression using ST_Simplify() performed by ST_AsMVTGeom() is less aggressive than what is typically applied.

I will not go into more detail analyzing vector tiles generation here and how rendering quality is affected by lossy compression of data. Doing so would require having a decent way to separate coordinate discretization from line simplification in the process.

Conclusions

Doing this analysis helped me much better understand some of the quality issues i see in vector tile based maps and what contribution the actual renderer makes to those. With both the lossy data compression in the tiles and the limitations of the rendering playing together it is often hard to practically see where the core issue is when you observe problems in such maps. I hope my selective analysis of the actual rendering helps make this clearer also for others – and might create an incentive for you to take a more in depth look at such subjects yourselves.

In terms of the quality criteria i looked at here, that is primarily precision, resolution and aliasing artefacts in polygon rendering, the tested client side renderers perform somewhere between so-so and badly. Openlayers shows the overall best performance. Tangram is in second place, primarily because of much more noisy results due to aliasing artefacts. Maplibre GL comes last with a massive bias expanding polygons beyond their actual shape, essentially rendering many of the tests useless and making any kind of precision rendering impossible – while being subject to similar levels of aliasing as Tangram.

Do i have a recommendation based on these results? Not really. It would be a bit unrealistic to make an overall recommendation based on a very selective analysis like this. Based on my results i would definitely like to see more examples of practical cartography based on the Openlayers vector tiles rendering engine.

If i should really give a recommendation to people looking to start a map design project and wondering what renderer to choose for that it would be a big warning if you have any kind of ambition regarding cartographic and visual quality of the results to think hard about choosing any of the client side renderers discussed here. Because one thing can be clearly concluded from these evaluations: If we compare these renderers with what is the state of the art in computer graphics these days – both in principle (as illustrated by the reference examples above) and practically (as illustrated by the Mapnik rendering – though it is a stretch to call this state of the art probably) – there is simply no comparison. Is this gap between what is possible technically and what is practically available in client side renderers warranted by engineering constraints (like the resources available on the client)? I doubt it, in particular considering what is behind in the web browser and how resource hungry these renderers are in practical use. But i can’t really tell for sure based on my limited insights into the domain.

September 2, 2021 at 08:24

Hey Chris! Really nice post!

Can you provide the dataset so I can try it with another renderer and see if I can improve the rendering side of it? I’d love to try.

Thanks!

September 2, 2021 at 20:52

Thanks.

Test patterns are available on:

https://github.com/imagico/testpatterns


October 3, 2023 at 14:33

maybe it is just me, but I do not see images for specific renderings and comparisons with reference

October 3, 2023 at 16:13

I noticed that occasionally the before-after slider widget is not working – but it was hard to pinpoint because once you turn on the developer console in the browser it always works (indicating a timing issue). I switched to a different wordpress plugin for this post now which does not appear to suffer from the same issue. Please let me know if there are still problems.

April 21, 2024 at 20:18

Thanks for the hint, you should mention it on top of the first slider. I had to use the trick on Firefox 125.0.1 (mint variant).

BTW impressive work, thanks!